If you're running your Windows Azure application on your local machine using the Visual Studio Development Fabric, it's easy to track down problems by setting a breakpoint in your code and stepping through it in the debugger. Once your app is running in the cloud, you can't attach the debugger.

This is where Azure's logging features come in handy.

The most straightforward way to add logging to your app is the RoleManager class in the Microsoft.ServiceHosting.ServiceRuntime namespace. RoleManager has a static method called WriteToLog that takes the name of the event log and the message.

```csharp
RoleManager.WriteToLog(string eventLogName, string message)
```

The supported values for eventLogName are Critical, Error, Warning, Information, and Verbose.
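Because eventLogName is a plain string, a typo such as "Informaton" won't be caught at compile time. One option is a thin wrapper that takes an enum instead. This is only a sketch under my own assumptions: `AzureLogLevel` and `AzureLog` are hypothetical names, not part of the SDK, and the constructor takes a delegate that stands in for `RoleManager.WriteToLog` so the code compiles without the SDK installed.

```csharp
using System;

// Hypothetical enum (not part of the SDK) mirroring the five event log names
// WriteToLog supports. Member names match the strings exactly, so ToString()
// yields the value WriteToLog expects.
public enum AzureLogLevel
{
    Critical,
    Error,
    Warning,
    Information,
    Verbose
}

// Hypothetical wrapper class; not part of the Azure SDK.
public class AzureLog
{
    // Stand-in for RoleManager.WriteToLog(string eventLogName, string message).
    private readonly Action<string, string> _writeToLog;

    public AzureLog(Action<string, string> writeToLog)
    {
        if (writeToLog == null) throw new ArgumentNullException("writeToLog");
        _writeToLog = writeToLog;
    }

    public void Write(AzureLogLevel level, string message)
    {
        // Converting the enum to a string keeps the event log name spelled correctly.
        _writeToLog(level.ToString(), message);
    }
}
```

In a role you'd construct it with `new AzureLog((name, msg) => RoleManager.WriteToLog(name, msg))` and then call `log.Write(AzureLogLevel.Information, "...")`.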

Here's a simple example of writing to the log from the code-behind of an ASP.NET page running in an Azure Web Role.

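```csharp
// At the top of the code-behind file:
using System;
using Microsoft.ServiceHosting.ServiceRuntime;

// ...

protected void m_lbtnWriteMessageToLog_Click(object sender, EventArgs e)
{
    // Write an Information-level entry containing the current time.
    RoleManager.WriteToLog(
        "Information",
        String.Format("The time is now: {0}", DateTime.Now));
}
```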
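The sample stamps the message with DateTime.Now, which depends on the time zone of whatever machine runs the code, so it can differ between your dev box and the cloud. The log files you'll copy out of the cloud later are labeled Events_UTC, so a UTC timestamp may be easier to line up with them. Here's a minimal sketch; `LogMessage.WithUtcTimestamp` is a hypothetical helper of mine, not part of the SDK.

```csharp
using System;
using System.Globalization;

// Hypothetical helper; not part of the Azure SDK.
public static class LogMessage
{
    // Prefixes the message with an ISO 8601 round-trip ("o") UTC timestamp,
    // e.g. "[2009-01-02T03:04:05.0000000Z] Button clicked".
    public static string WithUtcTimestamp(string message, DateTime time)
    {
        // Local (or Unspecified) values are converted as if they were local time.
        if (time.Kind != DateTimeKind.Utc)
            time = time.ToUniversalTime();
        return String.Format(CultureInfo.InvariantCulture, "[{0:o}] {1}", time, message);
    }

    // Convenience overload that reads the current UTC time.
    public static string WithUtcTimestamp(string message)
    {
        return WithUtcTimestamp(message, DateTime.UtcNow);
    }
}
```

In the click handler above, you'd then write `RoleManager.WriteToLog("Information", LogMessage.WithUtcTimestamp("Button clicked"));`.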

If you want to see the logging in action while you're running in the Development Fabric, locate the Development Fabric icon in your system tray, right-click the icon and choose Show Development Fabric UI.

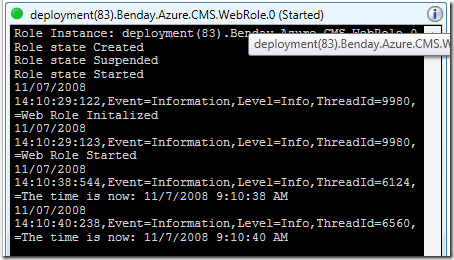

You should now see the Development Fabric window, and you can drill into it to locate the role that's doing the logging. In this case, I only have a WebRole and, as you can see in the diagram, it's running two instances.

From here you can drill into the role instances and see the log window and the log messages.

Now, let's say that you've deployed your app to Staging or Production and it's running in the cloud. You don't have this nice log window anymore, so how do you access your Windows Azure logs?

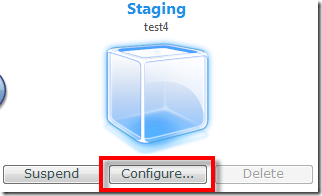

First, you'll log in to the Azure Services Developer Portal for your application. If you've deployed your application, you should see the Production and/or Staging environment displayed along with buttons to Suspend, Configure, and Delete your app.

To access your logs, begin by clicking the Configure button.

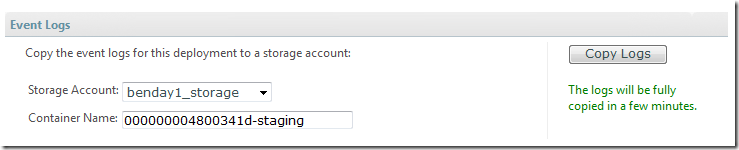

This takes you to the Service Tuning page. At the top, you'll see a section called Event Logs, which lets you copy your application's logs out to a Blob store in your Azure Services Storage Account. Choose the Storage Account you want to use, give it a Container Name (or leave the default auto-generated value), and then click Copy Logs.

The Container Name is the name of the Blob container that will hold your log files. After a few minutes, the log files will finish copying to your storage account.

Ok. Now what? How do you actually read the logs, right?

To get the logs, you can use the CloudDrive sample that comes with the Windows Azure Services SDK. CloudDrive is a PowerShell provider that lets you browse your blob and queue storage from the command line as if they were drives. By default, the sample's source code is installed at `C:\Program Files\Microsoft Service Hosting SDK\v1.0\samples\CloudDrive`.

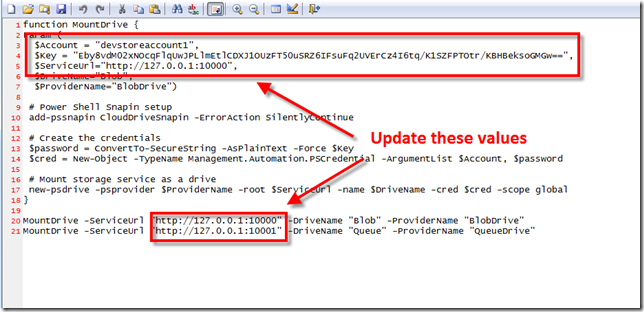

Run the buildme.cmd file to compile the CloudDrive app. Then you need to add your credentials to the MountDrive.ps1 file in the Scripts directory (`C:\Program Files\Microsoft Service Hosting SDK\v1.0\samples\CloudDrive\scripts`).

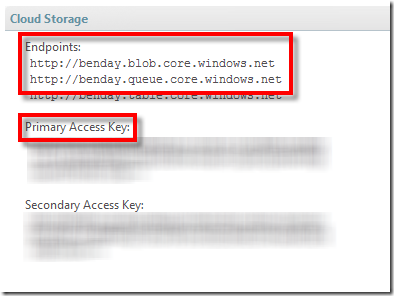

You need to update the values that are circled in the image above. You can get these values by logging into your Azure Services Developer Portal and selecting your Storage account. You should see a section on the page called Cloud Storage that looks similar to the image below.

Open MountDrive.ps1 in a text editor.

At the top of MountDrive.ps1, you need to set the values for $Account and $Key. The $Key value is your Primary Access Key from the Cloud Storage portal. Your account name is the first section of the Endpoint url. The endpoint for my blob store is http://benday.blob.core.windows.net so my account name is benday.
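Pulling the account name out of an endpoint URL is just a matter of taking the first label of the host name. Here's a small sketch of that rule; `StorageEndpoint.GetAccountName` is a hypothetical helper for illustration, not something that ships with CloudDrive or the SDK.

```csharp
using System;

// Hypothetical helper: the account name is the first label of the endpoint's
// host name, e.g. "benday" in http://benday.blob.core.windows.net.
public static class StorageEndpoint
{
    public static string GetAccountName(string endpointUrl)
    {
        // Throws UriFormatException if the string isn't a valid absolute URI.
        var uri = new Uri(endpointUrl);
        string host = uri.Host;              // e.g. "benday.blob.core.windows.net"
        int firstDot = host.IndexOf('.');
        if (firstDot <= 0)
            throw new ArgumentException("Endpoint host has no account prefix: " + host);
        return host.Substring(0, firstDot);  // e.g. "benday"
    }
}
```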

At the bottom of MountDrive.ps1, you need to set the endpoint values for the Blob store and the Queue store. As shown above, the endpoints for my account are http://benday.blob.core.windows.net and http://benday.queue.core.windows.net.
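The endpoints follow a predictable pattern: `http://ACCOUNT.blob.core.windows.net` for blobs and `http://ACCOUNT.queue.core.windows.net` for queues. The sketch below builds both from an account name, assuming that pattern holds for your account (compare the output with what the portal shows). `StorageEndpoints` is a hypothetical helper, not part of the SDK.

```csharp
using System;

// Hypothetical helper that builds cloud storage endpoints for an account,
// assuming the <account>.<service>.core.windows.net naming pattern.
public static class StorageEndpoints
{
    public static string Blob(string accountName)  { return Build(accountName, "blob"); }
    public static string Queue(string accountName) { return Build(accountName, "queue"); }

    private static string Build(string accountName, string service)
    {
        if (String.IsNullOrEmpty(accountName))
            throw new ArgumentException("Account name is required.", "accountName");
        return String.Format("http://{0}.{1}.core.windows.net", accountName, service);
    }
}
```

For my account, `StorageEndpoints.Blob("benday")` and `StorageEndpoints.Queue("benday")` give the two values that go at the bottom of MountDrive.ps1.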

Save MountDrive.ps1 and exit the text editor.

You've now finished configuring CloudDrive. You can run it by running runme.cmd in `C:\Program Files\Microsoft Service Hosting SDK\v1.0\samples\CloudDrive`.

This will start PowerShell and show you the configured Azure Storage repositories (see below).

Now let's retrieve the log files.

Type `cd Blob:` into the PowerShell window and press Enter. (HINT: don't forget the colon (':') after the word Blob, and remember that Blob is case-sensitive!)

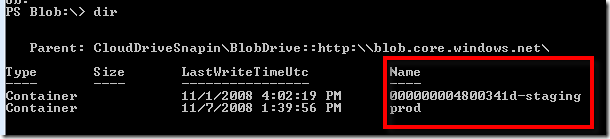

If you type `dir`, you'll see a list of the blob containers in your storage account.

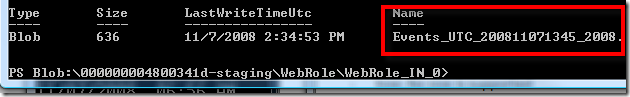

You should see the name of the blob container that you specified in the portal when you clicked the Copy Logs button. Type `cd name_of_your_container` and press Enter to get into the container. Keep using `dir` and `cd` until you see a file whose name starts with Events_UTC.

Now you need to copy this file to your local machine.

Type `copy-cd Events_UTC_filename c:` (substituting the actual file name) and press Enter. This will copy the log file to the root of your C: drive.

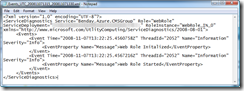

Now you can open the file in Notepad and view its contents.

So...what have we accomplished? First, you added some logging calls to your Azure application. Then you viewed the logs while running your app in the Development Fabric. Then you accessed the logs for a hosted service using CloudDrive.

Now you're all set to debug your deployed Azure application.

-Ben

-- Looking for help making decisions about or adopting Windows Azure? Need Windows Azure training or mentoring? Contact me via http://www.benday.com.