How a salon software company had 3,000 automated tests, multiple development teams, and a release process — and still couldn't tell you when anything would be done.

What They Were Experiencing

A salon and spa management software company asked us to help them figure out why everything felt so slow. Their product was a cloud-based platform used by thousands of salons, spas, and wellness businesses — appointment booking, point of sale, client management, reporting, the works. They had real customers, real revenue, and a real competitive position in their market.

On paper, things looked healthy. They had multiple development teams spread across the US and offshore. They had business analysts, product owners, a QA organization. They had approximately 3,000 automated user interface tests built with Selenium. They'd recently started pushing to release more frequently — moving from quarterly releases to every six to eight weeks. They had Azure DevOps for project tracking and builds. They had process.

But leadership couldn't tell how productive the teams actually were. Features took far longer than anyone expected. The manual regression test cycle before each release consumed two full weeks. Customers were telling them the product was too slow to evolve. And underneath all of it, a quiet fatalism had settled over the organization — a feeling that the status quo was locked in and nothing significant would or could change.

They thought they needed help measuring developer productivity. What they actually needed was someone to show them that their entire system — process, architecture, culture — was working against the flow of value.

What They Thought Was Wrong

Leadership believed the core problems were:

- They needed metrics to measure developer productivity

- The manual regression testing cycle was too long

- They needed to release more frequently

- The teams needed to be more efficient

The development teams believed the problems were:

- Requirements took too long and had to be nearly perfect the first time

- There was too much context switching

- The database team was a bottleneck

- Priorities shifted constantly

Both sides were partially right. But neither side could see how all of these problems were connected — or that the thing connecting them was far more fundamental than any single symptom.

What I Actually Found

My colleague and I spent several days interviewing leadership, developers, business analysts, product owners, QA staff, and the database team lead. We also pulled data directly from their Azure DevOps instance and ran the numbers on their actual flow metrics. Here's what emerged.

Finding #1: Seven Months to "Probably"

We pulled every work item from Azure DevOps and calculated the cycle time — the elapsed time from when an item was created until it was marked closed. The results were sobering.

Half of their work items took 29 days or less. That sounds almost reasonable until you look at the other half. Their 85th percentile was 202 days. That means 85% of their work took roughly seven months or less to complete — and 15% took even longer than that.

Said differently: if someone asked "when will this feature be done?", the honest answer based on their actual data was "there's an 85% chance it'll be done within seven months." That's not a useful answer for running a product business.
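
To make the percentile arithmetic concrete, here is a minimal Python sketch of how cycle time and its percentiles can be computed from created and closed dates. The work items are invented for illustration and are not the client's data. The nearest-rank percentile method is also an assumption, since reporting tools differ in how they interpolate.

```python
import math
from datetime import date


def cycle_time_days(created: date, closed: date) -> int:
    """Elapsed calendar days from when an item was created until it was closed."""
    return (closed - created).days


def percentile(values, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of items at or below it."""
    if not values:
        raise ValueError("need at least one value")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical (created, closed) pairs, for illustration only.
work_items = [
    (date(2023, 1, 2), date(2023, 1, 9)),
    (date(2023, 1, 5), date(2023, 2, 3)),
    (date(2023, 1, 10), date(2023, 3, 1)),
    (date(2023, 2, 1), date(2023, 8, 22)),
    (date(2023, 2, 15), date(2023, 11, 12)),
]

cycle_times = [cycle_time_days(created, closed) for created, closed in work_items]
p50 = percentile(cycle_times, 50)
p85 = percentile(cycle_times, 85)
print(f"50th percentile: {p50} days, 85th percentile: {p85} days")
```

The 85th percentile is the number to quote when someone asks for a date: it is the duration that 85% of past items finished within.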

Their throughput, the number of items completed per day, varied by a factor of at least four to one. With that level of variation, any forecast was essentially a guess. The most they could honestly say was "ten to forty weeks" where a well-managed team could say "ten to fifteen weeks." The difference between those two answers is the difference between a business that can plan and one that's flying blind.
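
The link between throughput variation and forecast width is simple arithmetic, sketched below in Python. The backlog size and weekly rates are hypothetical, chosen only to reproduce the two ranges quoted above, and the function assumes the slowest or fastest observed rate is sustained for the whole forecast, which makes it a bounding estimate rather than a probabilistic one.

```python
import math


def forecast_range_weeks(backlog_items, slowest_per_week, fastest_per_week):
    """Return (best_case_weeks, worst_case_weeks) for finishing a backlog.

    Bounds the forecast by assuming the team runs at its fastest or its
    slowest observed weekly throughput for the entire period.
    """
    if not 0 < slowest_per_week <= fastest_per_week:
        raise ValueError("need 0 < slowest_per_week <= fastest_per_week")
    best_case = math.ceil(backlog_items / fastest_per_week)
    worst_case = math.ceil(backlog_items / slowest_per_week)
    return best_case, worst_case


# Hypothetical backlog of 120 items.
erratic = forecast_range_weeks(120, slowest_per_week=3, fastest_per_week=12)  # 4:1 variation
steady = forecast_range_weeks(120, slowest_per_week=8, fastest_per_week=12)   # 1.5:1 variation

print(f"erratic team: {erratic[0]} to {erratic[1]} weeks")
print(f"steady team:  {steady[0]} to {steady[1]} weeks")
```

Both teams share the same best case. What the steadier team gains is a much tighter worst case, which is what makes the forecast usable for planning.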

Finding #2: Ninety-Three Things at Once

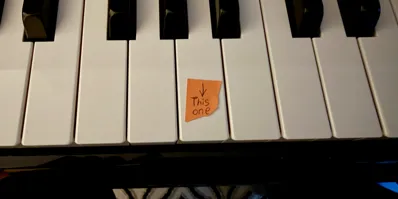

On a single day during our assessment, we ran a script against their Git repository. In the previous seven days, there had been 93 active branches. In the previous thirty days, 246. In total, the repository contained 3,967 branches, the vast majority dead and abandoned.

Ninety-three active branches means that within a single week, the development teams had touched ninety-three separate lines of work. Even allowing for a few short-lived branches, that is about as clear an indicator of a work-in-progress (WIP) explosion as you can get.
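
The script itself isn't reproduced here. As a rough sketch of how such a count could be made, the Python below asks Git for the commit time at the tip of every remote-tracking branch and counts the tips that fall inside a window. Treating "active" as "tip commit within the last N days" is an assumption, and the function names are illustrative.

```python
import subprocess
import time

SECONDS_PER_DAY = 86_400


def count_active(tip_timestamps, now, days):
    """Count branches whose tip commit is no older than `days` days before `now`."""
    cutoff = now - days * SECONDS_PER_DAY
    return sum(1 for ts in tip_timestamps if ts >= cutoff)


def branch_tip_timestamps(repo_path="."):
    """Return the Unix commit time of each remote-tracking branch tip.

    Note: refs/remotes can include a symbolic origin/HEAD entry, which
    would count the default branch twice; filter it out if that matters.
    """
    output = subprocess.run(
        ["git", "-C", repo_path, "for-each-ref",
         "--format=%(committerdate:unix)", "refs/remotes"],
        capture_output=True, text=True, check=True,
    ).stdout
    return [int(line) for line in output.splitlines() if line.strip()]


def summarize(repo_path=".", now=None):
    """Return active-branch counts for 7- and 30-day windows plus the total."""
    now = time.time() if now is None else now
    stamps = branch_tip_timestamps(repo_path)
    return {
        "active_7d": count_active(stamps, now, 7),
        "active_30d": count_active(stamps, now, 30),
        "total": len(stamps),
    }
```

Calling `summarize("/path/to/repo")` after a `git fetch --prune` gives a one-line snapshot that is cheap to capture weekly and track as a rough WIP trend.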

High WIP is the silent killer of software delivery. Every item in progress is an item consuming attention, creating context switches, generating dependencies, and not delivering value to customers. The research on this is unambiguous: the more things you work on simultaneously, the longer each individual thing takes, and the less total work actually gets completed.
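
One standard way to reason about this relationship is Little's Law from queueing theory: over the long run, average cycle time equals average WIP divided by average throughput. The Python sketch below applies it with invented numbers. It assumes a reasonably stable system, and it assumes throughput stays the same when WIP is reduced.

```python
def average_cycle_time_days(average_wip, throughput_per_day):
    """Little's Law: average cycle time = average WIP / average throughput."""
    if throughput_per_day <= 0:
        raise ValueError("throughput_per_day must be positive")
    return average_wip / throughput_per_day


# Hypothetical figures: the same team finishing 3 items per day.
before = average_cycle_time_days(average_wip=90, throughput_per_day=3)
after = average_cycle_time_days(average_wip=30, throughput_per_day=3)

print(f"90 items in progress: {before:.0f} days average cycle time")
print(f"30 items in progress: {after:.0f} days average cycle time")
```

Under those assumptions, cutting WIP to a third cuts average cycle time to a third without anyone working faster.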

The teams weren't slow because they lacked talent or effort. They were slow because the system was structured to ensure that nobody could focus on anything long enough to finish it.

Finding #3: Three Thousand Tests and a Two-Week Prayer

The company had invested significantly in test automation. Three thousand Selenium-based user interface tests — a genuinely impressive number. And yet they still needed a two-week manual regression cycle before every release. When we asked what would happen if they skipped the regression cycle, the answer was immediate: "We'd get too many bugs."

How can you have 3,000 automated tests and still need two weeks of manual testing?

The answer was that those tests only covered the "happy path" — the scenario where everything goes right. They didn't test error conditions, edge cases, or recovery paths. And more fundamentally, they were all user interface tests. There were essentially zero unit tests in the entire codebase — not in the C# backend, not in the Angular frontend. None.

The company had accidentally built an inverted testing pyramid. The widely used test pyramid model puts a large foundation of fast, focused unit tests at the bottom, a moderate layer of integration tests in the middle, and a small number of slow, expensive UI tests at the top. This company had it exactly backwards: thousands of slow UI tests, no unit tests, and a two-week manual cycle filling the gap.

The application had been built without any consideration for testability. No dependency injection. No separation of concerns designed for testing. Business logic lived in the UI layer. Business logic also lived in the database — 2,500 stored procedures, some running over a thousand lines of code. The stored procedures alone represented a massive body of critical business logic that was essentially unreachable by any automated test.

The two-week regression cycle wasn't the problem. It was a symptom of an architecture that had never been designed to be verified.

Finding #4: A Database That Had Become a Black Hole

600 Tables, 2,500 Stored Procedures

The relational database was excruciatingly complex: 600 tables, 2,500 stored procedures, 30 to 40 schemas aligned to different feature areas. The database team — twelve people — spent roughly half their time on production support and ad-hoc data extraction rather than building new features.

Heroic Tuning as a Way of Life

Performance was a constant battle. The appointment scheduling screen alone required hardcore SQL tuning to meet a two-second load target, and when it started slipping, customers complained immediately. This kind of heroic tuning effort wasn't isolated — it was happening across the entire application.

Good People Who Couldn't Contribute

During interviews, the database team lead told us something remarkable: they regularly hired people with exemplary database skills who then quit soon after in frustration. These experienced hires would go through a few code reviews, fail to get their SQL to pass review because the schema was so complex, and leave. The codebase had become so intricate that even skilled specialists couldn't contribute to it.

Separate Backlogs, No Shared Priorities

The database team kept a backlog separate from the application development teams. Database stories were split off from feature stories and handled independently, with no unified priority list across the teams. The database team's lead told us plainly that the team did not have one unified list of priorities for daily work, and that he was shifting priorities constantly.

The Dependency Chain

Every feature that touched the database — and most features did — created a cross-team dependency. That dependency meant waiting. Waiting meant more WIP. More WIP meant longer cycle times. The database had become a gravity well that pulled every feature request into a slower orbit.

Finding #5: "We Only Get One Shot at It"

We heard this sentiment from multiple groups: "We only get one shot at it, so the requirements need to be nearly perfect, and that takes a lot of time."

This single statement reveals an entire worldview about how software development works — and it's a worldview that was making everything worse.

When your release cycle is six to eight weeks, and you treat each release as a single chance to get things right, the pressure on requirements becomes enormous. Business analysts spent weeks refining stories. Product owners reviewed requirements documents that read like contracts rather than conversations. Acceptance criteria were written by BAs in isolation — one analyst told us there was "absolutely no interaction with the PO" when writing acceptance criteria. The POs were asked to review but never responded. The teams needed to read minds on the first attempt.

And when those painstakingly detailed requirements finally reached the development teams, the stories were either too big (one analyst described them as "books") or too small to be meaningful. The product team was doing what one interviewee called "paper architecture" — suggesting or forcing implementation choices on the teams before a single line of code was written.

All of this ceremony existed because the organization believed that getting requirements wrong was catastrophically expensive. And with six-to-eight-week release cycles and a two-week regression gate, they were right — rework was catastrophically expensive. But the solution wasn't more upfront analysis. The solution was shorter cycles with faster feedback, so that getting something slightly wrong became cheap and correctable rather than a disaster.

Finding #6: "Are We Being Sold?"

We heard from multiple people across multiple teams that the plan was to sell the company in the coming years. Whether or not this was official, the belief had taken hold — and it had injected a looming fatalism into the entire organization.

People framed it as a tension between "doing it right" and "doing it right now." Statements like "it's hard to think about how to go faster in the future when it's clear onboarding new customers is the number one priority today, and tomorrow" came up repeatedly. The subtext was: why invest in process improvement or technical debt reduction if the whole thing is going to change hands anyway?

This wasn't anger. It wasn't rebellion. It was something more corrosive: resignation. The teams had concluded that the status quo was locked in and that nothing significant would or could change. They had good ideas for improvement — they'd identified the manual regression problem, the database dependencies, the need to get product owners closer to teams — but there was no space to act on any of it.

Leadership actually did care about improvement. But the message hadn't landed. The teams needed to hear — explicitly, repeatedly, from the top — that process improvement was a priority regardless of what the future held. Without that message, every conversation about "doing things better" felt academic.

Finding #7: Everything Was Connected to Everything

The individual findings tell a story. But the real insight was seeing how they reinforced each other into a system that resisted improvement.

Large batch sizes (six to eight weeks of work) meant the stakes for each release were high. High stakes meant requirements had to be exhaustively detailed upfront. Exhaustive requirements meant features started later. Late starts meant multiple features hit development simultaneously. Simultaneous features meant high WIP. High WIP meant constant context switching. Context switching meant everything took longer. Longer cycle times meant releases felt even more high-stakes. And the cycle continued.

Meanwhile, the two-week regression gate acted as a dam. Everything piled up behind it. The only way to get anything to customers was to batch it all together, push it through the dam, and hope. The dam existed because there were no unit tests. There were no unit tests because the code hadn't been designed for testability. The code hadn't been designed for testability because nobody had ever made that a priority — and now the codebase was so large and complex that adding tests felt impossible.

And underneath all of it, a quiet conviction that none of this would change anyway.

The Diagnosis

I told them something that surprised them: I could actually see what was wrong with their process from the outside, faster than their own teams could articulate it — not because their teams weren't smart, but because the people inside the system couldn't see the system. They were too close to the implementation and too far from the patterns.

Their company was like a coffee shop that had taken hundreds of pre-orders for custom drinks, assigned baristas to make dozens of drinks simultaneously while tracking where they were in each one, required each barista to ask other baristas for ingredients, and then — even as some drinks were finished — waited six to eight weeks to do a final quality check before delivering any of them to customers. All while the manager asked how they could serve more customers.

The answer was what coffee shops, manufacturers, and every other industry that moves physical goods have known for decades: limit your work in progress, reduce your batch size, and actively manage the flow through the system.

The 93 active branches weren't a Git problem. They were a photograph of an organization that couldn't say "not yet" to anything — not to features, not to edge cases, not to customization requests, not to shifting priorities. The result was a product that the CEO himself described as "overly complex — we've gone after every edge case imaginable, and that makes it easy to break lots of stuff."

What We Recommended

We structured the recommendations in two phases: stabilize the process first, then address the technical debt.

Adopt Scrum — for real, across the entire organization. They were practicing almost none of the Scrum framework despite using some of its vocabulary. No focus or clear priorities. No Definition of Done. No proper Sprint Reviews. No Retrospectives. Daily standups that were too long and not useful. Getting the discipline of short sprints, cross-functional teams, and continuous improvement in place would force visibility into every other problem.

Address the "are we being sold?" question directly. Leadership needed to explicitly communicate that process improvement mattered regardless of the company's future ownership. Without that message, no change initiative would get traction. The teams needed to hear it, believe it, and see it reinforced through action.

Start measuring and managing flow. Install flow metrics tooling. Make cycle time and throughput visible to everyone. Use the data to have honest conversations about capacity, forecasting, and WIP limits. Stop trying to plan capacity through prediction and start using empirical evidence from what the teams actually delivered.

Limit work in progress aggressively. This was the single highest-leverage change available. Reducing the number of simultaneous work items would decrease cycle times, reduce context switching, and make the teams measurably more productive without adding a single person.

Start adding unit tests — but not randomly. Adopt a policy: new code gets tests, modified code gets tests, bug fixes start with a failing test. Don't try to retroactively test the entire codebase. Focus testing effort on code that's actively delivering business value — anything that gets created or changes gets a test. Over time, this would build a safety net that could start replacing the two-week regression cycle.
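
As an illustration of "bug fixes start with a failing test", here is a minimal Python sketch. The function, the bug, and the scenario are all hypothetical (the client's code was C# and Angular, and none of it is shown here). The idea is that the test for the reported case is written first, fails against the buggy code, and passes once the fix is in.

```python
import unittest
from datetime import datetime


def appointments_overlap(start_a, end_a, start_b, end_b):
    """Return True when two appointments share any time.

    Hypothetical bug being fixed: an earlier version compared with <=,
    so back-to-back appointments were flagged as double bookings.
    """
    return start_a < end_b and start_b < end_a


class AppointmentOverlapTest(unittest.TestCase):
    def test_back_to_back_appointments_do_not_overlap(self):
        # Written first to reproduce the reported bug; failed before the fix.
        nine = datetime(2024, 1, 8, 9, 0)
        ten = datetime(2024, 1, 8, 10, 0)
        eleven = datetime(2024, 1, 8, 11, 0)
        self.assertFalse(appointments_overlap(nine, ten, ten, eleven))

    def test_partially_overlapping_appointments_are_detected(self):
        # Guards the existing behavior so the fix does not break it.
        nine = datetime(2024, 1, 8, 9, 0)
        ten = datetime(2024, 1, 8, 10, 0)
        eleven = datetime(2024, 1, 8, 11, 0)
        noon = datetime(2024, 1, 8, 12, 0)
        self.assertTrue(appointments_overlap(nine, eleven, ten, noon))
```

Saved as a module, this runs with `python -m unittest`. A test like this only becomes possible once the rule lives in a plain function rather than in a screen or a stored procedure, which is why the policy targets code that is already being touched.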

Begin migrating data out of the relational database where it doesn't need to be relational. Not everything in those 600 tables needed the constraints and complexity of SQL Server. Identifying data that could live in a NoSQL store, and then moving it there, would reduce the database team's burden and ease the dependency bottleneck.

Consolidate priorities into a single, visible backlog. Multiple teams with multiple backlogs and no unified priority list meant nobody could see the whole picture. A single prioritized backlog — even if different teams pulled from different parts of it — would force the "what matters most?" conversation that wasn't happening.

Get product owners onto the development teams. The handoff between product and development was unnecessary and expensive. BAs writing acceptance criteria in isolation with zero PO interaction was a process designed to produce misunderstandings. On a healthy team, the product owner is a team member, not a requirements vendor.

The Lesson

This company had invested in all the things you're supposed to invest in. Automated tests. Multiple teams. Release planning. Azure DevOps. Business analysts. Process documentation. From a distance, it looked like a modern software organization.

Up close, every one of those investments was working against them. The automated tests tested the wrong things. The multiple teams created dependencies instead of independence. The release planning optimized for when work started rather than when value was delivered. The business analysts produced requirements in isolation. The process documentation managed handoffs that shouldn't have existed.

The fundamental problem was flow — or rather, the complete absence of it. Nothing in the organization was designed to help work move from idea to customer quickly. Instead, everything was designed to manage the complexity that accumulated when work didn't flow. More process to coordinate more teams working on more things simultaneously. More testing to catch more bugs introduced by more context switching. More planning to predict when more features might be done despite having no empirical basis for prediction.

The most powerful thing we showed them was their own data. When you can point to a chart and say "85% of your work takes seven months or less — is that useful to your business?", the conversation changes. It stops being about whether individual developers are fast enough and starts being about whether the system is designed to let work flow at all.

Every company I've assessed that struggles with delivery speed has some version of this problem. They're not slow because their people are slow. They're slow because the system they've built — through years of individually reasonable decisions — has made speed structurally impossible. Fixing it doesn't require hiring more people or buying better tools. It requires seeing the system clearly and then making the disciplined choice to do fewer things at once, finish what you start, and shorten the distance between an idea and a customer's hands.

If your teams are working on dozens of things simultaneously but nothing seems to finish — if your release cycle includes weeks of manual testing you can't eliminate — if your best people are spending half their time on production support instead of building the future — if you have metrics for everything except whether you're actually getting faster — you might not have a productivity problem. You might have a flow problem.

That's the kind of problem I help companies see clearly.